#include <itkStandardStochasticVarianceReducedGradientDescentOptimizer.h>

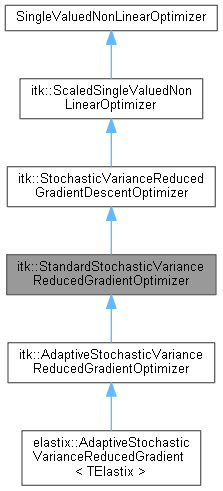

This class implements a gradient descent optimizer with a decaying gain.

If C(x) is the cost function to be minimised, the parameters x are updated iteratively according to

\[ x(k+1) = x(k) - a(k) \, \frac{dC}{dx} \]

The gain a(k) at iteration k is defined by

\[ a(k) = \frac{a}{(A + k + 1)^{\alpha}} \]

This scheme is well suited to use with a stochastic estimate of the gradient dC/dx. Set NewSamplesEveryIteration to "true" to achieve this effect. For more information on this strategy, see:

S. Klein, M. Staring, J.P.W. Pluim, "Evaluation of Optimization Methods for Nonrigid Medical Image Registration Using Mutual Information and B-Splines," IEEE Transactions on Image Processing, vol. 16, no. 12, December 2007.
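The decaying gain and the update rule described above can be illustrated with a small standalone sketch. It does not use the elastix or ITK classes: `GainParameters`, `ComputeGain` and `MinimiseToyCost` are hypothetical names, and the quadratic toy cost is an assumption chosen only because its derivative is trivial. The default values of a, A and alpha are the ones listed for `m_Param_a`, `m_Param_A` and `m_Param_alpha` on this page.

```cpp
#include <cassert>
#include <cmath>

// Hypothetical stand-in for the gain parameters a, A and alpha.
struct GainParameters
{
  double a = 1.0;
  double A = 1.0;
  double alpha = 0.602;
};

// Decaying gain a(k) = a / (A + k + 1)^alpha.
inline double
ComputeGain(const GainParameters & p, double k)
{
  assert(p.A + k + 1.0 > 0.0); // the base of the power must be positive
  return p.a / std::pow(p.A + k + 1.0, p.alpha);
}

// Minimises the toy cost C(x) = 0.5 * (x - target)^2, whose derivative is
// dC/dx = x - target, using x(k+1) = x(k) - a(k) dC/dx.
inline double
MinimiseToyCost(double x, double target, const GainParameters & p, unsigned iterations)
{
  double time = 0.0; // plays the role of the iteration number k
  for (unsigned i = 0; i < iterations; ++i)
  {
    const double gain = ComputeGain(p, time);
    const double derivative = x - target;
    x -= gain * derivative;
    time += 1.0;
  }
  return x;
}
```

With the default parameters the gain shrinks from roughly 0.66 at k = 0 towards zero, so early iterations take large steps and later ones refine the estimate. In a stochastic setting the exact derivative in this sketch would be replaced by a noisy estimate.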

This class also serves as a base class for other StochasticVarianceReducedGradient type algorithms, like the AcceleratedStochasticVarianceReducedGradientOptimizer.

Definition at line 56 of file itkStandardStochasticVarianceReducedGradientDescentOptimizer.h.

Static Public Member Functions

| Type | Name |
| --- | --- |
| static Pointer | New () |

Static Public Member Functions inherited from itk::StochasticVarianceReducedGradientDescentOptimizer

| Type | Name |
| --- | --- |
| static Pointer | New () |

Static Public Member Functions inherited from itk::ScaledSingleValuedNonLinearOptimizer

| Type | Name |
| --- | --- |
| static Pointer | New () |

Protected Member Functions

| Type | Name |
| --- | --- |
| virtual double | Compute_a (double k) const |
| virtual double | Compute_beta (double k) const |
| | StandardStochasticVarianceReducedGradientOptimizer () |
| virtual void | UpdateCurrentTime () |
| | ~StandardStochasticVarianceReducedGradientOptimizer () override=default |

Protected Member Functions inherited from itk::StochasticVarianceReducedGradientDescentOptimizer

| Type | Name |
| --- | --- |
| void | PrintSelf (std::ostream &os, Indent indent) const override |
| | StochasticVarianceReducedGradientDescentOptimizer () |
| | ~StochasticVarianceReducedGradientDescentOptimizer () override=default |

Protected Member Functions inherited from itk::ScaledSingleValuedNonLinearOptimizer

| Type | Name |
| --- | --- |
| virtual void | GetScaledDerivative (const ParametersType &parameters, DerivativeType &derivative) const |
| virtual MeasureType | GetScaledValue (const ParametersType &parameters) const |
| virtual void | GetScaledValueAndDerivative (const ParametersType &parameters, MeasureType &value, DerivativeType &derivative) const |
| void | PrintSelf (std::ostream &os, Indent indent) const override |
| | ScaledSingleValuedNonLinearOptimizer () |
| void | SetCurrentPosition (const ParametersType &param) override |
| virtual void | SetScaledCurrentPosition (const ParametersType &parameters) |
| | ~ScaledSingleValuedNonLinearOptimizer () override=default |

Protected Attributes

| Type | Name |
| --- | --- |
| double | m_CurrentTime { 0.0 } |
| bool | m_UseConstantStep {} |

Protected Attributes inherited from itk::StochasticVarianceReducedGradientDescentOptimizer

| Type | Name |
| --- | --- |
| unsigned long | m_CurrentInnerIteration {} |
| unsigned long | m_CurrentIteration { 0 } |
| DerivativeType | m_Gradient {} |
| unsigned long | m_LBFGSMemory { 0 } |
| double | m_LearningRate { 1.0 } |
| ParametersType | m_MeanSearchDir {} |
| unsigned long | m_NumberOfInnerIterations {} |
| unsigned long | m_NumberOfIterations { 100 } |
| DerivativeType | m_PreviousGradient {} |
| ParametersType | m_PreviousPosition {} |
| ParametersType | m_PreviousSearchDir {} |
| ParametersType | m_SearchDir {} |
| bool | m_Stop { false } |
| StopConditionType | m_StopCondition { MaximumNumberOfIterations } |
| MultiThreaderBase::Pointer | m_Threader { MultiThreaderBase::New() } |
| double | m_Value { 0.0 } |

Protected Attributes inherited from itk::ScaledSingleValuedNonLinearOptimizer

| Type | Name |
| --- | --- |
| ScaledCostFunctionPointer | m_ScaledCostFunction {} |
| ParametersType | m_ScaledCurrentPosition {} |

Private Attributes

| Type | Name |
| --- | --- |
| double | m_InitialTime { 0.0 } |
| double | m_Param_A { 1.0 } |
| double | m_Param_a { 1.0 } |
| double | m_Param_alpha { 0.602 } |
| double | m_Param_beta {} |

Additional Inherited Members

Protected Types inherited from itk::StochasticVarianceReducedGradientDescentOptimizer

| Type | Name |
| --- | --- |
| using | ThreadInfoType = MultiThreaderBase::WorkUnitInfo |

| using itk::StandardStochasticVarianceReducedGradientOptimizer::ConstPointer = SmartPointer<const Self> |

Definition at line 66 of file itkStandardStochasticVarianceReducedGradientDescentOptimizer.h.

| using itk::StandardStochasticVarianceReducedGradientOptimizer::Pointer = SmartPointer<Self> |

Definition at line 65 of file itkStandardStochasticVarianceReducedGradientDescentOptimizer.h.

| using itk::StandardStochasticVarianceReducedGradientOptimizer::Self = StandardStochasticVarianceReducedGradientOptimizer |

Standard ITK.

Definition at line 62 of file itkStandardStochasticVarianceReducedGradientDescentOptimizer.h.

| using itk::StandardStochasticVarianceReducedGradientOptimizer::Superclass = StochasticVarianceReducedGradientDescentOptimizer |

Definition at line 63 of file itkStandardStochasticVarianceReducedGradientDescentOptimizer.h.

Codes of stopping conditions. The MinimumStepSize stop condition never occurs, but may be implemented in inheriting classes.

Definition at line 81 of file itkStochasticVarianceReducedGradientDescentOptimizer.h.

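| itk::StandardStochasticVarianceReducedGradientOptimizer::StandardStochasticVarianceReducedGradientOptimizer () | protected |

| itk::StandardStochasticVarianceReducedGradientOptimizer::~StandardStochasticVarianceReducedGradientOptimizer () | override, protected, default |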

override, virtual

Sets a new LearningRate before calling the Superclass' implementation, and updates the current time.

Reimplemented from itk::StochasticVarianceReducedGradientDescentOptimizer.

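| virtual double itk::StandardStochasticVarianceReducedGradientOptimizer::Compute_a (double k) const | protected, virtual |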

Function to compute the step size for SGD at time/iteration k.

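| virtual double itk::StandardStochasticVarianceReducedGradientOptimizer::Compute_beta (double k) const | protected, virtual |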

Function to compute the step size for SQN at time/iteration k.

virtual

Get the current time. This equals the CurrentIteration in this base class but may be different in inheriting classes, such as the AcceleratedStochasticVarianceReducedGradientOptimizer.

| itk::StandardStochasticVarianceReducedGradientOptimizer::ITK_DISALLOW_COPY_AND_MOVE | ( | StandardStochasticVarianceReducedGradientOptimizer | ) |

| itk::StandardStochasticVarianceReducedGradientOptimizer::itkOverrideGetNameOfClassMacro | ( | StandardStochasticVarianceReducedGradientOptimizer | ) |

Run-time type information (and related methods).

| static Pointer itk::StandardStochasticVarianceReducedGradientOptimizer::New () | static |

Method for creation through the object factory.

inline, virtual

Set the current time to the initial time. This can be useful to 'reset' the optimisation, for example if you changed the cost function during optimisation. Use this function with care.

Definition at line 125 of file itkStandardStochasticVarianceReducedGradientDescentOptimizer.h.

virtual

Set/Get the initial time. Should be >= 0. This function is superfluous, since Param_A does effectively the same. However, in inheriting classes, like the AcceleratedStochasticVarianceReducedGradientOptimizer, the initial time may have a different function than Param_A. Default: 0.0.
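The remark that the initial time is redundant with Param_A can be made explicit with the gain formula from the class description. The derivation below is a sketch that assumes the current time starts at the initial time t_0 and is incremented by 1 in every iteration, as described for UpdateCurrentTime in this base class; the symbols t_0, n and A' are introduced here for illustration only.

```latex
\[
  a(t_0 + n)
    = \frac{a}{(A + t_0 + n + 1)^{\alpha}}
    = \frac{a}{(A' + n + 1)^{\alpha}},
  \qquad A' = A + t_0 .
\]
```

Under that assumption, starting at time t_0 with parameter A yields the same gain sequence as starting at time 0 with parameter A + t_0.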

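virtual

Set/Get A, the offset added to the iteration number in the denominator of the gain a(k).

virtual

Set/Get a, the numerator of the gain a(k).

virtual

Set/Get alpha, the decay exponent of the gain a(k).

virtual

Set/Get beta.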

override

Set current time to 0 and call superclass' implementation.

| virtual void itk::StandardStochasticVarianceReducedGradientOptimizer::UpdateCurrentTime () | protected, virtual |

Function to update the current time. This function just increments the CurrentTime by 1. Inheriting classes may implement something smarter, for example, dependent on the progress.

Reimplemented in itk::AdaptiveStochasticVarianceReducedGradientOptimizer.
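The override pattern described above can be sketched outside of ITK as follows. `TimeKeeper`, `ProgressAwareTimeKeeper`, `Advance` and the `progress` value in [0, 1] are hypothetical and only illustrate how a subclass might replace the default "+1" update; this is not the rule used by the elastix subclass named above.

```cpp
#include <algorithm>

// Hypothetical stand-in for the time-keeping part of the optimizer.
class TimeKeeper
{
public:
  virtual ~TimeKeeper() = default;

  double
  GetCurrentTime() const
  {
    return m_CurrentTime;
  }

  // Called once per iteration, after the step has been taken.
  // 'progress' is an assumed quality measure in [0, 1].
  void
  Advance(double progress)
  {
    m_Progress = progress;
    this->UpdateCurrentTime();
  }

protected:
  // Default behaviour: increment the current time by 1.
  virtual void
  UpdateCurrentTime()
  {
    m_CurrentTime += 1.0;
  }

  double m_CurrentTime{ 0.0 };
  double m_Progress{ 0.0 };
};

// Hypothetical subclass: the better the progress, the smaller the time
// increment, so a decaying gain stays large for longer.
class ProgressAwareTimeKeeper : public TimeKeeper
{
protected:
  void
  UpdateCurrentTime() override
  {
    const double clamped = std::clamp(m_Progress, 0.0, 1.0);
    m_CurrentTime += 1.0 - clamped;
  }
};
```

Because the current time is the input of the gain computation, slowing the clock down in this way postpones the decay of the step size without touching the gain formula itself.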

| double itk::StandardStochasticVarianceReducedGradientOptimizer::m_CurrentTime { 0.0 } | protected |

The current time, which serves as input for Compute_a

Definition at line 151 of file itkStandardStochasticVarianceReducedGradientDescentOptimizer.h.

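| double itk::StandardStochasticVarianceReducedGradientOptimizer::m_InitialTime { 0.0 } | private |

Settings

Definition at line 164 of file itkStandardStochasticVarianceReducedGradientDescentOptimizer.h.

| double itk::StandardStochasticVarianceReducedGradientOptimizer::m_Param_A { 1.0 } | private |

Definition at line 160 of file itkStandardStochasticVarianceReducedGradientDescentOptimizer.h.

| double itk::StandardStochasticVarianceReducedGradientOptimizer::m_Param_a { 1.0 } | private |

Parameters, as described by Spall.

Definition at line 158 of file itkStandardStochasticVarianceReducedGradientDescentOptimizer.h.

| double itk::StandardStochasticVarianceReducedGradientOptimizer::m_Param_alpha { 0.602 } | private |

Definition at line 161 of file itkStandardStochasticVarianceReducedGradientDescentOptimizer.h.

| double itk::StandardStochasticVarianceReducedGradientOptimizer::m_Param_beta {} | private |

Definition at line 159 of file itkStandardStochasticVarianceReducedGradientDescentOptimizer.h.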

| bool itk::StandardStochasticVarianceReducedGradientOptimizer::m_UseConstantStep {} | protected |

Constant step size or others, different value of k.

Definition at line 154 of file itkStandardStochasticVarianceReducedGradientDescentOptimizer.h.

Generated on 26-02-2026 for elastix by Doxygen 1.16.1 (669aeeefca743c148e2d935b3d3c69535c7491e6)